Training a Reasoning Model on Consumer Hardware with GRPO and vLLM

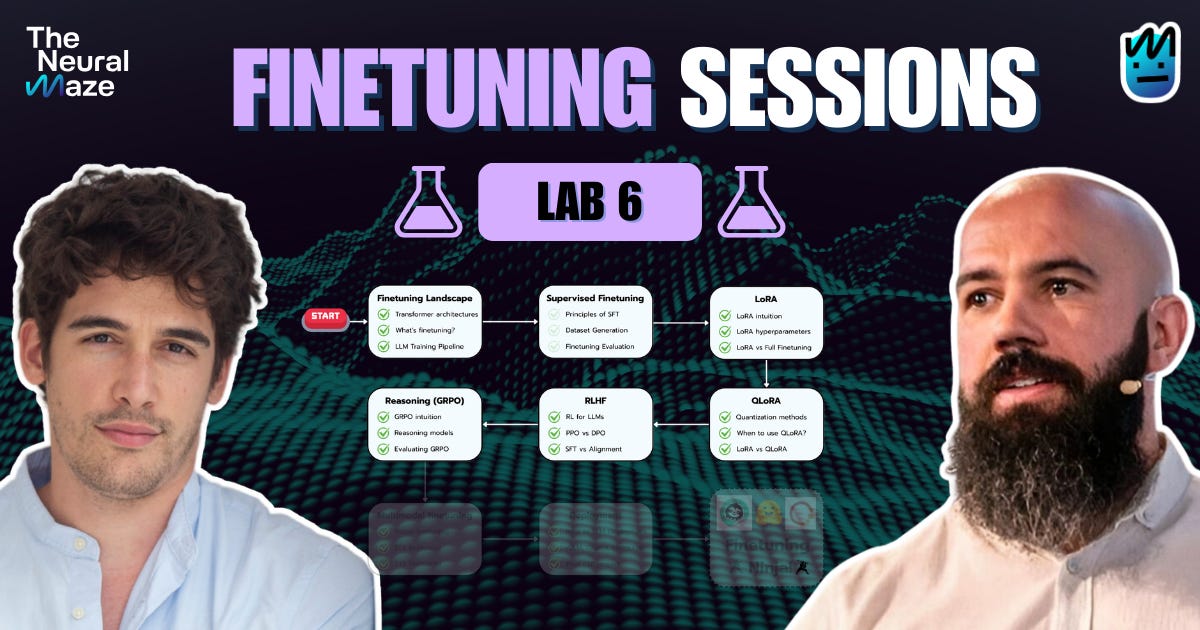

Finetuning Sessions · Lab 6 / 8

In today's lab, we're moving from theory to the terminal.

We are stepping onto the reasoning alignment frontier with a hands-on Group Relative Policy Optimization (GRPO) experiment.

If you haven't read our recent deep dive on the GRPO architecture and its modern variants, make sure to review it before continuing!

The shift from traditional reinforcement learning methods like PPO to GRPO isn't just a minor algorithmic tweak; it's a fundamental rethinking of the computational cost required to teach large language models how to reason.

While PPO taught us how to optimize models effectively, it relies on a glaring assumption: that you have the VRAM budget to keep four models loaded simultaneously. Those are the policy, a frozen reference model, the reward model, and the heavy value model (the "critic"), which is typically comparable in size to the policy itself.

GRPO attacks the compute and memory bottleneck of alignment by dropping the critic model entirely. Instead of learning a value estimate, it samples a group of responses to the same prompt and scores each one relative to the others in that group. With one fewer full-size model to hold in memory, training a reasoning model directly on consumer hardware becomes practical.
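To make "scoring a group relative to each other" concrete, here is a minimal sketch of the group-relative advantage: each response's reward is normalized by the mean and standard deviation of its own group. The function name, the `eps` value, and the use of the population standard deviation are assumptions made for illustration; real implementations may differ in these details.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each reward against the statistics of its own group.

    `rewards` holds one scalar reward per sampled response to the SAME prompt.
    `eps` (an assumed value) avoids division by zero when all rewards are equal.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; an assumption for this sketch
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four sampled answers to one prompt: two correct (1.0), two wrong (0.0).
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
```

Responses that beat the group average get a positive advantage and the rest get a negative one, so no separate value model is needed to provide a baseline. Note that a group where every response earns the same reward yields all-zero advantages and therefore no learning signal.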

💻 Today, we are going deep into the implementation.

We will walk through a live training job using Unsloth and look at how integrating vLLM directly into the fine-tuning stack breaks the slow generation bottleneck: it avoids holding a second copy of the model in memory for inference and substantially increases generation throughput.

We'll also tackle the realities of Reinforcement Learning with Verifiable Rewards (RLVR), specifically how to prevent models from "reward hacking" through length-biased rambling, by designing strict, deterministic Python reward functions.
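As a rough illustration of what "strict and deterministic" can mean, the sketch below rewards a completion only if it contains exactly one answer tag whose content matches the reference, and subtracts a flat penalty for overly long output. The `<answer>` tag format, the function name, the `max_chars` threshold, and the penalty size are all hypothetical choices for this example, not the lab's actual reward design.

```python
import re

# Hypothetical output format: the final answer must appear inside <answer> tags.
ANSWER_RE = re.compile(r"<answer>\s*(.*?)\s*</answer>", re.DOTALL)


def correctness_reward(completions, answers, max_chars=2000):
    """Return one deterministic score per completion.

    1.0 for exactly one <answer> tag whose content equals the reference,
    0.0 otherwise, minus 0.5 if a well-formed completion exceeds `max_chars`
    (an assumed threshold that discourages rambling).
    """
    scores = []
    for text, gold in zip(completions, answers):
        matches = ANSWER_RE.findall(text)
        if len(matches) != 1:
            # Missing or repeated answer tags earn nothing: no partial credit.
            scores.append(0.0)
            continue
        score = 1.0 if matches[0] == gold.strip() else 0.0
        if len(text) > max_chars:
            score -= 0.5  # flat length penalty
        scores.append(score)
    return scores


scores = correctness_reward(
    ["Let me think. <answer> 42 </answer>", "Probably 41."],
    ["42", "42"],
)
```

Because the score depends only on string matching and length, the same completion always receives the same reward, and padding the response with extra text can only lower it.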

By the end of this lab, you'll have a first-principles understanding of how to orchestrate your own reasoning model training locally, proving that you don't need a massive GPU cluster to push the boundaries of AI alignment.